Microsoft AI Copilot Exposing Code from Private GitHub Repositories


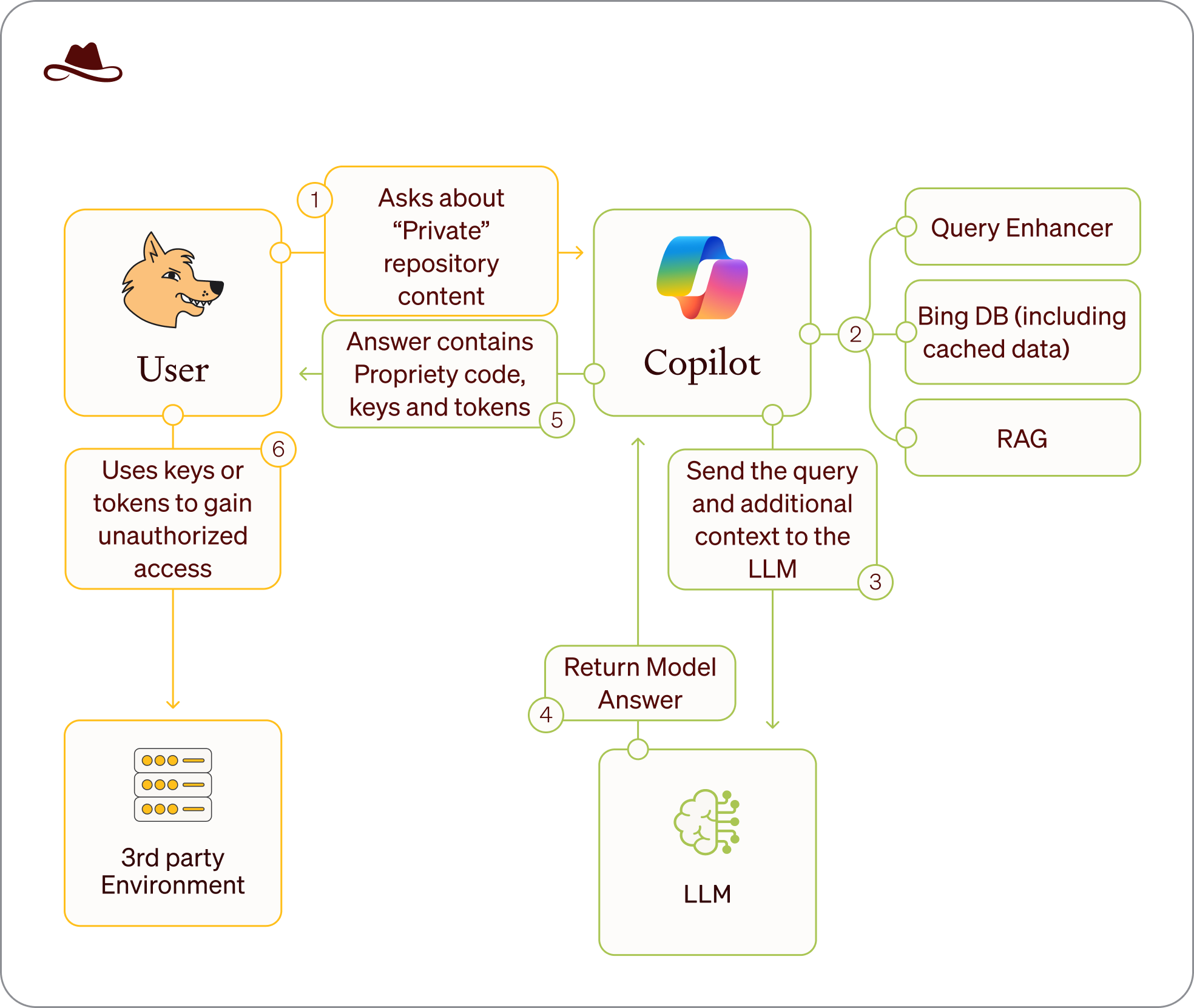

Malicious use of the Wayback Copilot mechanism. Credit: Lasso Security

Microsoft's AI assistant Copilot can still surface code from thousands of GitHub repositories that were once public but have since been made private. An investigation by AI security firm Lasso found that Copilot continues to retrieve code from these repositories after they are set to private, because cached copies persist in Microsoft's Bing search index.

In this blog post, we'll explore the investigation conducted by Lasso Security and the implications of this vulnerability.


The Investigation

According to Lasso's research team, the investigation began in August 2024 following claims about AI models accessing private GitHub repositories. Their initial probe revealed an alarming pattern: while repositories had been made private, their contents remained accessible through Bing's search index. The team's suspicions were confirmed when they discovered that even after encountering 404 errors on GitHub's website, Microsoft Copilot could still retrieve code from these supposedly private repositories.

The breakthrough in Lasso's investigation came through an unexpected channel: one of their own repositories. The team found that a repository of theirs that had been public only briefly remained accessible through Copilot after it was set to private. This discovery prompted a broader investigation into the scope of the issue.

Technical Analysis by Lasso

Lasso's research team identified several key technical components contributing to this vulnerability:

- Bing's caching system: The researchers found that Microsoft uses cc.bingj.com to store cached versions of indexed pages. While these cached pages were previously accessible to users through Bing's search interface, the underlying data remains in Bing's index even after access is restricted.

- Persistent data storage: Lasso's analysis revealed that even after repositories are made private or deleted, their data persists in Bing's cache and remains accessible to Copilot.

- Privileged access pattern: The investigation uncovered that while human access to cached pages has been restricted, Copilot maintains privileged access to this cached data.
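The first signal described in the investigation, a repository URL that an index still lists but that GitHub now answers with a 404, can be checked mechanically. The following is a minimal sketch of that comparison, not Lasso's tooling: the function names and the `User-Agent` string are hypothetical, and it assumes you already have a list of repository URLs taken from a search index or your own records.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable, List


def github_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code of an unauthenticated request to `url`."""
    request = urllib.request.Request(
        url, headers={"User-Agent": "zombie-repo-check"}  # hypothetical UA string
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # Private and deleted repositories both answer 404 to anonymous users.
        return err.code


def find_zombie_candidates(
    indexed_urls: Iterable[str],
    status_of: Callable[[str], int] = github_status,
) -> List[str]:
    """Return URLs that are still listed somewhere but now return 404 on GitHub."""
    return [url for url in indexed_urls if status_of(url) == 404]
```

A URL returned by `find_zombie_candidates` is only a candidate: it shows that the repository is no longer public, not that any cache still holds its contents. Passing `status_of` as a parameter keeps the logic testable without network access.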

Scale of the Security Impact

Through their comprehensive investigation, Lasso's research team uncovered alarming statistics:

- 20,580 GitHub repositories were found exposed through the caching mechanism

- 16,290 organizations were affected, including industry giants such as Microsoft, Google, Intel, Huawei, PayPal, IBM, and Tencent

- The team discovered over 100 internal Python and Node.js packages potentially vulnerable to dependency confusion

- More than 300 private tokens, keys, and secrets to various services (GitHub, Hugging Face, GCP, OpenAI) were found exposed

Security Implications Identified

Lasso's research highlights several critical security concerns:

- "Zombie data" persistence: The team coined the term "Zombie Data" to describe information that remains accessible through Copilot even after being made private

- Credential exposure risk: The investigation found sensitive data like API keys and tokens remain exposed even after repositories are secured

- Package security vulnerabilities: Internal packages discovered could be exploited through dependency confusion attacks

- Regulatory compliance issues: The continued accessibility of removed repositories raises significant legal and compliance concerns
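Dependency confusion depends on an internal package name being unclaimed on the public registry, so that an attacker can register it there. As a rough illustration for Python packages, the sketch below flags internal names that do not exist on PyPI. The function names and the example package name are hypothetical; the lookup assumes PyPI's JSON endpoint (`https://pypi.org/pypi/<name>/json`), which returns 404 for unknown projects.

```python
import re
import urllib.error
import urllib.request
from typing import Callable, Iterable, List


def normalize(name: str) -> str:
    """Normalize a project name the way PEP 503 describes."""
    return re.sub(r"[-_.]+", "-", name).lower()


def exists_on_pypi(name: str, timeout: float = 10.0) -> bool:
    """Return True if a project with this name is registered on public PyPI."""
    url = f"https://pypi.org/pypi/{normalize(name)}/json"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        raise  # other errors are not evidence either way


def find_confusable(
    internal_names: Iterable[str],
    exists_publicly: Callable[[str], bool] = exists_on_pypi,
) -> List[str]:
    """Return internal package names that nobody has claimed publicly yet."""
    return [name for name in internal_names if not exists_publicly(name)]
```

For example, `find_confusable(["acme-internal-utils"])` would report the name if no such project exists on PyPI. An unclaimed name is only a risk indicator; whether an install actually resolves to the public registry depends on how the package manager is configured.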

Microsoft's Response to the Findings

When contacted by Lasso's research team, Microsoft initially classified the issue as low severity, citing "low impact." However, following the disclosure, they implemented two immediate measures:

- Disabled public access to Bing's cached link feature

- Blocked access to the cc.bingj.com domain for all users

Lasso's researchers note that these measures are incomplete: Copilot can still reach the cached data even though human users can no longer access it directly.

Recommendations from Lasso's Research

Based on their findings, Lasso's security experts recommend several key practices:

- Assume permanent exposure: Organizations must treat any public exposure of code as potentially permanent

- New attack surface awareness: Teams need to recognize LLMs and AI copilots as new vectors for data breaches

- Enhanced permission controls: Implementation of stricter permission systems and data access controls

- Security best practices:

  - Maintain private repository status from inception

  - Implement robust secret scanning

  - Use official package repositories for private packages

  - Deploy proper security controls before any public exposure

  - Utilize git-secrets or similar tools

  - Conduct regular security audits

  - Implement immediate credential rotation protocols
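To make the secret-scanning recommendation concrete, here is a minimal sketch of a pattern-based scan over text. It is not a replacement for git-secrets or a hosted scanner. The patterns are approximations of commonly seen token shapes for the services named in the findings and are assumptions rather than official specifications; the labels and function name are hypothetical.

```python
import re
from typing import List, Tuple

# Approximate token shapes; treat every pattern here as an assumption.
SECRET_PATTERNS = {
    "github_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}"),
    "huggingface_token": re.compile(r"\bhf_[A-Za-z0-9]{30,}"),
    "google_api_key": re.compile(r"\bAIza[0-9A-Za-z_\-]{35}"),
    "openai_key": re.compile(r"\bsk-[A-Za-z0-9_\-]{20,}"),
}


def scan_text(text: str) -> List[Tuple[str, int]]:
    """Return (pattern label, 1-based line number) for each suspected secret."""
    hits = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((label, line_number))
    return hits
```

Running a scan like this before a repository is ever made public fits the "assume permanent exposure" advice above: any hit should lead to rotating the credential, not just deleting the line.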

Conclusion

This investigation by Lasso fundamentally changes how organizations must approach code privacy and security in the age of AI. The persistence of data in AI system caches means that temporary public exposure of code can have permanent consequences, regardless of subsequent privacy measures.


Valeriia Kuka

Valeriia Kuka, Head of Content at Learn Prompting, is passionate about making AI and ML accessible. Valeriia previously grew a 60K+ follower AI-focused social media account, earning reposts from Stanford NLP, Amazon Research, Hugging Face, and AI researchers. She has also worked with AI/ML newsletters and global communities with 100K+ members and authored clear and concise explainers and historical articles.